Safety in Autonomous Driving explained in a nutshell (A-Z) – Today: “B” as in “Behavior Prediction”

By: Ole Harms

I will regularly share some quick glimpses of safety-related issues that matter when bringing Autonomous Driving (AD) onto the streets. Transparency is key here, and I want to contribute a bit by providing some short and easy-to-digest explanations.

I will try to cover as many letters of the alphabet as possible, though not necessarily in alphabetical order. After kicking off this series with ASIL, today I want to continue with “Behavior Prediction”.

Simply put, the core components of an Autonomous Driving system are

- Sensing & perception – the detecting of moving and static objects

- Situation interpretation incl. behavior prediction of moving objects

- Trajectory/ path planning – establishing the so-called driving plan, and finally

- Executing the driving plan (this includes the triggering of actuating elements & safety systems)

Predicting the behavior of other road users is obviously a core element of making safe decisions. It informs the decisions that determine the driving plan, which in turn triggers actions such as braking, evasive maneuvers, or the definition of an optimized trajectory.

It is widely agreed among experts that accurate prediction is one of the hardest problems in autonomous driving.

Behavior prediction is complex because many variables must be considered, for example:

- Visible static and moving (dynamic) objects

- Nonvisible static or moving objects

- Road geometry

- Reduced sensor capabilities, e.g., in heavy rain, and

- Traffic rules

Just imagine how differently vehicles would behave when driving through a given intersection that has a yield sign compared with the same intersection without one.

Further, behavior prediction obviously cannot always rely on an “unobstructed view”, as autonomous vehicles can only partially observe the surrounding world with their sensors, e.g., due to object occlusion, limited sensor range, low sensor performance, or sensor noise (random variations in sensor output). Cellular V2X (Vehicle-to-everything) communication will certainly play an important role in the future, both to understand the maneuver planning of nearby vehicles and to “virtually look around the corner”, because it directly connects cars with each other as well as with the infrastructure and other road users. But this will not be available everywhere.

Another constraint is the restricted computational performance on board the vehicle, mostly because of cost implications. Intelligent solutions to share computational resources among different functions are required here.

All the challenges mentioned above imply that, in addition to rule-based systems, machine learning (and deep learning in particular) is indispensable here. Before briefly touching on this, I want to introduce a generic (and simplified) prediction scenario in Image 1 to provide some necessary context.

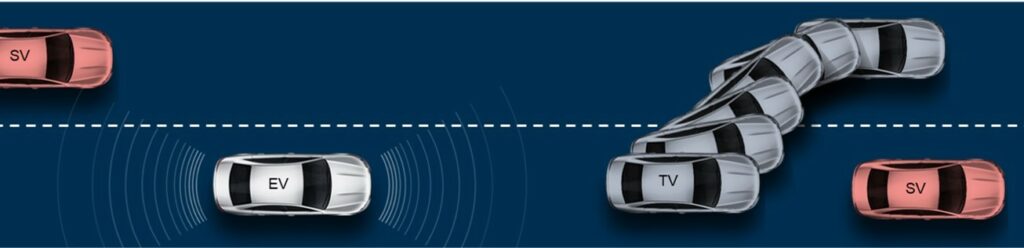

Here, the “Ego Vehicle (EV)” aims to predict the behavior of a dedicated “Target Vehicle (TV)”. “Surrounding Vehicles (SV)” are so close to the “Target Vehicle” that their behavior directly affects the behavior of the “Target Vehicle”. “Not Relevant Vehicles” are excluded from prediction as they are assumed not to influence the behavior of the “Target Vehicle”. It is worth mentioning that there are different approaches to classifying “Not Relevant Vehicles” (called object selection), e.g., based on a maximum number of vehicles, their distance, or their lane position.
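To make object selection a bit more tangible, here is a minimal Python sketch that combines the three criteria mentioned above (distance, lane position, and a maximum number). The `Vehicle` class, the function name, and all threshold values are assumptions for illustration only; real systems will use far richer criteria.

```python
import math
from dataclasses import dataclass


@dataclass
class Vehicle:
    vehicle_id: str
    x: float   # longitudinal position in metres (hypothetical road frame)
    y: float   # lateral position in metres
    lane: int  # lane index, e.g. 0 = rightmost lane


def select_surrounding_vehicles(target, others, max_distance=50.0,
                                max_lane_offset=1, max_count=6):
    """Return the vehicles treated as "Surrounding Vehicles" of the target.

    All three thresholds are illustrative assumptions, not recommended values.
    """
    candidates = []
    for vehicle in others:
        distance = math.hypot(vehicle.x - target.x, vehicle.y - target.y)
        lane_offset = abs(vehicle.lane - target.lane)
        if distance <= max_distance and lane_offset <= max_lane_offset:
            candidates.append((distance, vehicle))
    # Keep only the closest candidates; everything else counts as "not relevant".
    candidates.sort(key=lambda pair: pair[0])
    return [vehicle for _, vehicle in candidates[:max_count]]


target = Vehicle("tv", 0.0, 0.0, 1)
others = [
    Vehicle("a", 10.0, 0.0, 1),   # same lane, close
    Vehicle("b", 20.0, 3.5, 2),   # neighbouring lane, close
    Vehicle("c", 200.0, 0.0, 1),  # same lane, but far away
    Vehicle("d", 5.0, 7.0, 3),    # close, but two lanes away
]
selected = select_surrounding_vehicles(target, others)
print([v.vehicle_id for v in selected])
```

In this toy scene only vehicles "a" and "b" would be kept as “Surrounding Vehicles”; "c" is dropped because of its distance and "d" because of its lane position.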

It would certainly go way too far for an “in a nutshell” article to elaborate on the different deep learning approaches/ modules/ combinations, but I will try to explain the basic concept briefly in an as-simple-as-possible way.

Current and historic states (e.g., physics-based: position, velocity, acceleration, deceleration) of both the “Target Vehicle” and the “Surrounding Vehicles” are good starting points. Unfortunately, this can only be one part of the prediction model: it is fair to assume that not all “Surrounding Vehicles” can be directly observed in all their states, and only a very short timeframe can be predicted this way. So, in addition, a deep neural network is fed with, e.g., a simplified “Bird’s-Eye View” (a top-down representation of the environment) and input from other sensors that provide all available data about the surroundings. This allows, e.g., the effects of maneuvers or interactions between vehicles to be taken into account.
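As a rough illustration of the physics-based part, the following Python sketch extrapolates a vehicle's position from its current state. The constant-acceleration model, the function name, and the parameters are my assumptions for this example. It also shows why such a model is only useful for a very short timeframe: it knows nothing about lanes, traffic rules, or other vehicles.

```python
def predict_positions(x, y, vx, vy, ax, ay, horizon_s=2.0, step_s=0.5):
    """Extrapolate future (t, x, y) points, assuming constant acceleration.

    Positions are in metres, velocities in m/s, accelerations in m/s^2.
    The kinematic model is an illustrative assumption, not a full predictor.
    """
    steps = int(round(horizon_s / step_s))
    points = []
    for i in range(1, steps + 1):
        t = i * step_s
        future_x = x + vx * t + 0.5 * ax * t * t
        future_y = y + vy * t + 0.5 * ay * t * t
        points.append((t, future_x, future_y))
    return points


# A vehicle driving straight ahead at 10 m/s while accelerating with 2 m/s^2.
for t, px, py in predict_positions(0.0, 0.0, 10.0, 0.0, 2.0, 0.0):
    print(f"t={t:.1f}s  x={px:.2f}m  y={py:.2f}m")
```

The further the horizon is pushed out, the less plausible the assumption of an unchanged acceleration becomes, which is exactly the gap the learned part of the prediction model is meant to close.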

To give you a glimpse of what a “deep neural network” is: the human brain basically served as the inspiration for neural networks. Machine learning algorithms, created by developers with the aim of recognizing patterns, lay the foundation. A neural network consists of nodes, loosely resembling the neurons of the human brain, which are grouped into layers. It is called “deep” if it has many such layers between its input and its output. Rather than only following explicitly programmed rules, it learns from the examples it was trained on and draws its conclusions from that experience. Each layer of nodes works on a distinct set of features based on the previous layer’s output. A deep neural network can learn to solve its tasks (e.g., classification) from vision data, acoustic data, or text.
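For readers who like to see the layering idea in code, here is a tiny sketch in plain Python. It only shows how data flows from layer to layer; the weights are random and untrained, so the output is meaningless, and the layer sizes, the ReLU activation, and the three output classes are assumptions chosen purely for illustration.

```python
import math
import random


def dense_layer(inputs, weights, biases):
    """One layer: every node sums the weighted outputs of the previous layer,
    adds its bias, and applies a ReLU (negative sums become 0)."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(max(0.0, total))
    return outputs


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def random_layer(n_in, n_out, rng):
    """Random, UNTRAINED weights; a real network would learn these from data."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(features, layers):
    """Pass the input through all hidden layers, then score the output classes."""
    activation = features
    for weights, biases in layers[:-1]:
        activation = dense_layer(activation, weights, biases)
    weights, biases = layers[-1]
    scores = [sum(w * x for w, x in zip(node_weights, activation)) + bias
              for node_weights, bias in zip(weights, biases)]
    return softmax(scores)


rng = random.Random(0)
# 4 input features -> two hidden layers of 8 nodes -> 3 output classes.
layers = [random_layer(4, 8, rng), random_layer(8, 8, rng), random_layer(8, 3, rng)]
probabilities = forward([0.1, 0.2, 0.3, 0.4], layers)
print(probabilities)
```

Training, i.e. adjusting the weights so that the output matches known examples, is deliberately left out here; frameworks built for that purpose handle it in practice.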

The output of this complex computation is basically, and again very simplified, the maneuver intention of the “Target Vehicle” and the so-called trajectory prediction, which describes its likely future behavior. For this purpose, a series of future locations of the “Target Vehicle” over a certain time window is predicted. Image 2 tries to visualize the very basic concept. In reality, behavior prediction would of course consider all safety-relevant road users, with particular attention to their vulnerability.
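One way to picture these two outputs is as a small data structure: a probability per possible maneuver plus a list of timestamped future positions. The Python sketch below is only an assumption about how such a result could be represented; the class name, the maneuver labels, and the linear interpolation between predicted points are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Prediction:
    # Maneuver intention: hypothetical labels mapped to probabilities.
    maneuver_probabilities: Dict[str, float]
    # Trajectory prediction: (time in s, x in m, y in m), times strictly increasing.
    trajectory: List[Tuple[float, float, float]]

    def most_likely_maneuver(self) -> str:
        return max(self.maneuver_probabilities, key=self.maneuver_probabilities.get)

    def position_at(self, t: float) -> Tuple[float, float]:
        """Linearly interpolate the predicted position at time t."""
        points = self.trajectory
        if not points[0][0] <= t <= points[-1][0]:
            raise ValueError("t is outside the predicted time window")
        for (t0, x0, y0), (t1, x1, y1) in zip(points, points[1:]):
            if t0 <= t <= t1:
                ratio = (t - t0) / (t1 - t0)
                return (x0 + ratio * (x1 - x0), y0 + ratio * (y1 - y0))
        # Only reached when the trajectory holds a single point at time t.
        return (points[-1][1], points[-1][2])


prediction = Prediction(
    maneuver_probabilities={
        "keep_lane": 0.2,
        "lane_change_left": 0.7,
        "lane_change_right": 0.1,
    },
    trajectory=[(0.0, 0.0, 0.0), (1.0, 10.0, 0.5), (2.0, 20.0, 2.0)],
)
print(prediction.most_likely_maneuver())
print(prediction.position_at(1.5))
```

A planner for the “Ego Vehicle” could then query such a structure to ask where the “Target Vehicle” is expected to be at any moment within the predicted time window.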

Based on the prediction results, the driving/trajectory plan for the “Ego Vehicle” is then established. This is another complex topic in itself (e.g., determining the maximum timeframe for which a vehicle can safely follow a trajectory without an emergency maneuver) and thus may well be a topic for a different “safety in a nutshell” article.

I hope I have conveyed that correctly predicting the future actions of all (dynamic) road users is not only the necessary prerequisite for autonomous cars to execute the right maneuvers at the right time. It is certainly also one of the hardest challenges to solve in order to ensure safety in self-driving cars. This will keep us at VAIVA, as well as everybody else in this space, busy over the next years.

For this reason, a couple of AV companies (e.g., Argo, Waymo) have recently released open datasets with hundreds of thousands of vehicle trajectories and interactions of certain agents (e.g., cyclists, pedestrians), collected over thousands of hours of driving. The ambition is to accelerate research in this field and to train and develop models that can capture complex behaviors.